AI is moving fast. Really fast. Organizations are shipping AI-powered features at a pace that would’ve seemed impossible just two years ago. Developers are embedding large language models into customer-facing applications, building retrieval-augmented generation systems, and deploying AI agents that make real business decisions.

But speed without safety creates problems. As AI systems become more capable and more embedded in business processes, the risks multiply. Data breaches through prompt injection. Sensitive information leaking through AI responses. Models making biased decisions that harm customers. Compliance violations that rack up fines.

That’s where AI TRiSM comes in. Short for AI Trust, Risk and Security Management, this framework from Gartner helps organizations build AI systems that are powerful and trustworthy. Think of it as the safety net that lets you innovate with confidence because you’ve got the right guardrails in place.

What is AI TRiSM?

AI TRiSM is a framework that, in Gartner’s words, ensures governance, trustworthiness, fairness, reliability, robustness, efficacy, and data protection throughout the AI lifecycle. Gartner introduced this framework to address the unique challenges that AI implementations present—challenges that traditional approaches simply weren’t designed to handle.

At its core, AI TRiSM gives you systematic ways to build trust, risk, and security management into deployments from the ground up. It’s about building confidence that your AI models will do what they’re supposed to do, the way they’re supposed to do it. This approach recognizes that managing AI-related risks requires attention across technical, operational, and governance dimensions for AI models and applications alike.

Who Invented AI TRiSM?

Gartner developed the AI TRiSM framework, first presenting it as one of their Top Strategic Technology Trends for 2023 (published in October 2022) as organizations began rapidly adopting generative AI technologies. The research firm recognized that the explosive growth of AI systems—particularly generative AI models—created a new category of risks that existing frameworks couldn’t fully address. Gartner AI TRiSM emerged from this need, providing a structured way to think about trust, risk, and security management specifically for artificial intelligence.

Why AI TRiSM Matters Now

Traditional security frameworks were built for a world where applications followed predictable patterns. You could audit code, test for known vulnerabilities, and set clear boundaries around what implementations could and couldn’t do. These AI technologies are different. They learn from data, generate novel outputs, and make decisions in ways that can be hard to predict or explain. This creates new categories of risk that need new approaches to risk management.

According to Gartner, organizations that operationalize AI transparency, trust and security are projected to see their AI models achieve improvements of up to 50% in terms of adoption, business goals, and user acceptance by 2026. That’s not just about avoiding problems; it’s about moving faster because you’ve built the right foundation for effective risk management.

The shift toward AI technologies also means that security teams need new tools and techniques. Traditional application security testing wasn’t designed to catch prompt injection or test for data leakage through LLM context windows. Organizations need approaches that understand how these AI technologies and AI models actually work and the unique ways they can be compromised or misused.

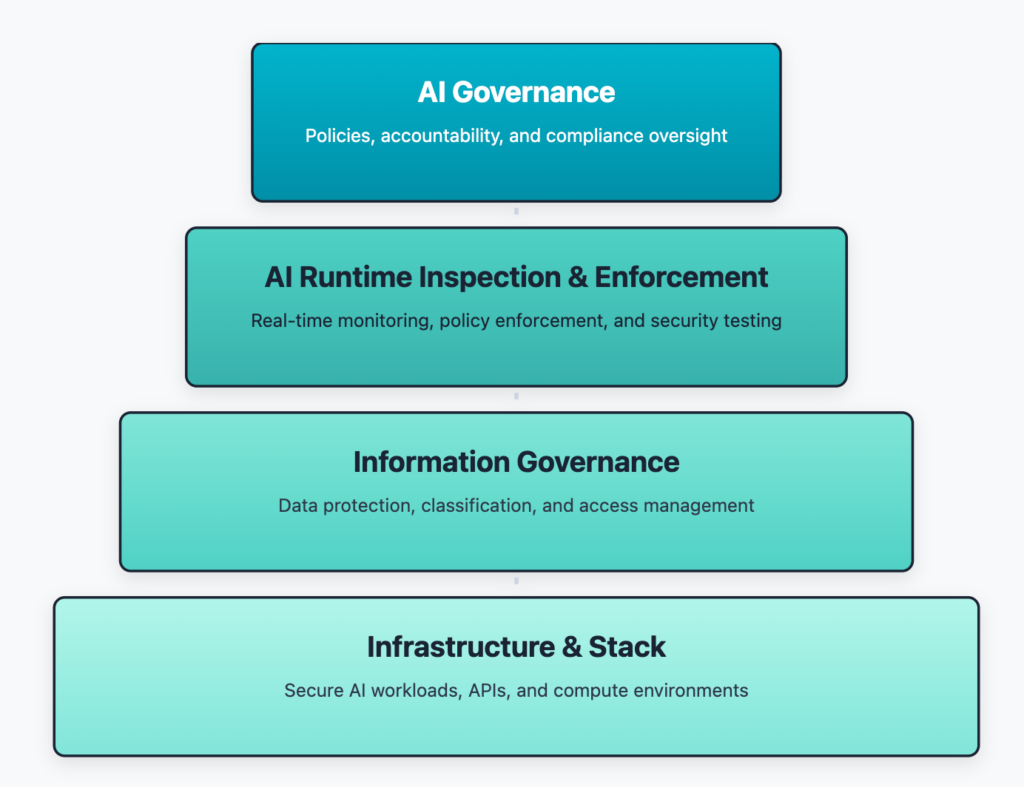

The 4 Pillars of AI TRiSM

Gartner’s AI TRiSM framework is commonly implemented across four functional layers, each addressing different aspects of trust, risk and security management. These layers work together to protect AI systems throughout their lifecycle.

Pillar 1: AI Governance

Governance forms the foundation of the AI TRiSM framework. This layer ensures that implementations align with enterprise policies, regulatory requirements, and ethical guidelines. It’s about establishing clear accountability for how these technologies are used across the organization and making sure every implementation follows the rules.

Key components include:

- AI Catalog Management: Maintaining an inventory of all entities in the organization, including models, agents, and applications. You can’t govern what you can’t see.

- Model Validation: Continuous evaluation to ensure performance remains reliable and doesn’t degrade over time. This includes testing for accuracy, bias, and fairness.

- Compliance Monitoring: Tracking how implementations operate against regulatory requirements and internal policies. As regulations evolve globally, this matters more every day.

- Responsible AI Practices: Implementing frameworks that ensure systems are developed and deployed ethically, with consideration for fairness, transparency, and accountability.

This foundational layer establishes the policies and procedures that guide all initiatives within an organization. It’s what ensures adoption happens in a controlled, responsible way.

Pillar 2: AI Runtime Inspection and Enforcement

While governance sets the policies, runtime inspection ensures those policies are actually enforced when AI systems are operating. This pillar focuses on real-time monitoring and intervention during interactions with AI systems.

Runtime inspection and enforcement includes:

- Real-Time Monitoring: Tracking behavior as it happens, watching for anomalies, unexpected outputs, or policy violations. This is where you catch problems before they impact users or business operations with AI systems.

- Content Anomaly Detection: Identifying when AI systems generate inappropriate, inaccurate, or potentially harmful outputs. This becomes especially important with generative AI systems that can produce unpredictable results.

- Adversarial Attack Resistance: Protecting AI systems from manipulation through prompt injection, model poisoning, and other attacks specifically targeting these implementations. These are threats that traditional security tools weren’t designed to handle against AI systems.

- Application Security: Securing the interfaces and integration points where AI systems connect with other applications, APIs, and data sources. This includes protecting against the OWASP LLM Top 10 vulnerabilities that target AI-powered applications.

This layer is where AI TRiSM gets practical. Good policies aren’t enough. You need mechanisms that enforce those policies automatically as AI systems operate. Runtime inspection provides continuous oversight to ensure AI systems behave as expected, even when processing millions of requests. Beyond security enforcement, runtime inspection in AI TRiSM also includes monitoring for model drift, explainability signals, and policy adherence.

Pillar 3: Information Governance

The quality and security of data determine trustworthiness. Information governance ensures that data used throughout the lifecycle is properly secured, classified, and accessed according to policy.

This pillar addresses:

- Data Protection: Implementing security measures to prevent sensitive data from being exposed. This includes encryption, access controls, and data loss prevention specifically adapted for these use cases. Effective data protection prevents scenarios where implementations inadvertently expose customer PII or proprietary information.

- Data Classification: Properly identifying and labeling sensitive data so systems know what information requires special handling. This is fundamental to data protection across all AI technologies.

- Training Data Security: Ensuring the data used to train or fine-tune implementations is properly sourced, validated, and secured. Compromised training data can lead to biased or unreliable outcomes.

- Context Preservation: Maintaining appropriate data lineage and context so organizations understand how data flows through these implementations. This supports both data protection requirements and compliance needs.

Information governance recognizes that data privacy and data protection are foundational to trustworthy deployments. If systems can’t be trusted to handle data appropriately, they can’t be trusted at all.

Pillar 4: Infrastructure and Stack

The infrastructure layer ensures that AI systems run in secure, compliant, and resilient environments. This pillar addresses the technical foundation that supports all deployments of AI systems.

Key considerations include:

- Secure AI Workloads: Protecting the compute environments where AI systems run, whether that’s cloud-based, on-premises, or hybrid infrastructure supporting AI systems.

- API Security: Securing the interfaces through which applications interact with AI systems. As capabilities are increasingly exposed through APIs, these integration points need protection.

- Multi-Cloud Support: Ensuring controls work consistently across different infrastructure providers, giving organizations flexibility in how they deploy AI systems.

- Traditional Security Integration: Making sure AI-specific security measures work alongside existing security tools and frameworks. AI security shouldn’t operate in isolation from broader protection strategies.

This foundation layer ensures that all the policy and governance work in the upper layers has a secure technical platform to operate on. Without solid infrastructure security for AI systems, even the best policies won’t prevent security incidents involving AI systems.

The Growing Importance of AI TRiSM in Application Protection

As artificial intelligence reshapes how applications are built, the AI TRiSM framework matters more than ever for application security. These capabilities aren’t separate features that sit alongside applications. They’re deeply integrated into application logic, touching sensitive data, making business decisions, and interacting with customers through AI systems.

When Application Security Meets AI Risks

Traditional application security testing wasn’t designed for AI-powered applications and AI systems. Static analysis can’t detect prompt injection vulnerabilities. Legacy dynamic testing tools don’t understand how to manipulate responses or test for data leakage through context windows in AI systems. This creates gaps that attackers are already starting to exploit in AI systems.

Organizations implementing robust AI governance recognize that risk management must extend beyond traditional vulnerabilities. You need to test for AI-specific risks like prompt injection, sensitive information disclosure, and improper output handling—the kinds of vulnerabilities outlined in the OWASP LLM Top 10. This is where runtime testing matters most for trust, risk and security management of AI models.

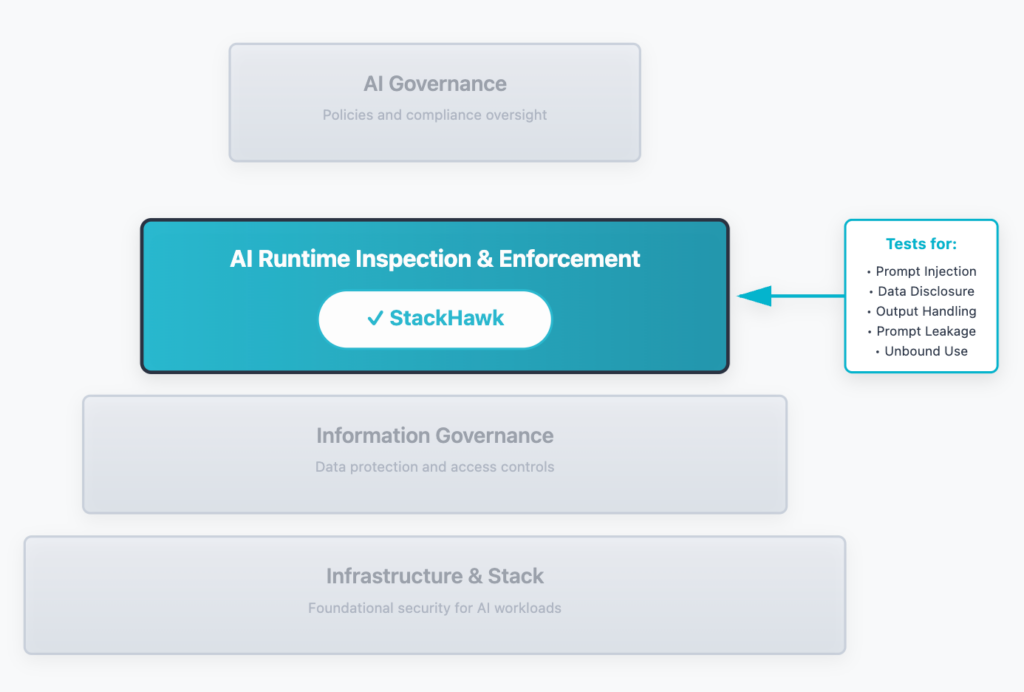

How StackHawk Supports AI TRiSM Implementation

StackHawk’s LLM security testing capabilities address key security-focused aspects of the AI runtime inspection pillar of the AI TRiSM framework. While many organizations struggle to find practical ways to implement AI TRiSM techniques, StackHawk provides a concrete solution that fits naturally into existing development workflows.

Here’s how StackHawk supports implementing AI TRiSM:

Runtime Testing for AI Risks: StackHawk tests applications in their actual runtime environment, the same way attackers would target them. This catches risks that only appear when applications are running, not sitting in a code repository. The platform identifies five critical vulnerabilities from the OWASP LLM Top 10:

- LLM01: Prompt Injection—detecting when attackers can manipulate through crafted inputs

- LLM02: Sensitive Data Disclosure—finding when implementations leak confidential information

- LLM05: Improper Output Handling—catching unvalidated outputs used in dangerous ways

- LLM07: System Prompt Leakage—identifying exposed system instructions that guide behavior

- LLM10: Unbound Consumption—detecting missing rate limits that allow resource exhaustion

Native Integration with Development Workflows: Implementing AI TRiSM models effectively means building protection into the development process, not bolting it on afterward. StackHawk runs as part of CI/CD pipelines, testing every code change before it reaches production. Developers get immediate feedback on vulnerabilities in the same tools they already use for other security findings.

Continuous Monitoring Across the AI Lifecycle: AI TRiSM techniques emphasize continuous evaluation. StackHawk provides ongoing runtime testing that ensures protection doesn’t degrade as applications evolve. Every deployment gets tested, maintaining a consistent posture as capabilities change.

Developer-Focused Remediation: Finding vulnerabilities is only valuable if developers can fix them. StackHawk provides detailed reproduction steps and fix guidance for every finding, helping developers learn secure implementation practices. This educational component supports the broader goals of building security knowledge across development teams.

Here’s what works: AI TRiSM frameworks deliver better results when they’re part of normal application security programs, not separate, siloed efforts. Organizations already doing application security testing can extend their programs to cover risks using the same tools and workflows they’ve already established.

Implementing AI TRiSM: Practical Considerations

Understanding the framework is one thing. Actually implementing AI TRiSM in your organization is another. Let’s look at practical steps for getting started with trust, risk, and security management.

Start with Visibility

You can’t manage risks you don’t know about. Many organizations discover they have more implementations than they realized—developers experimenting with new capabilities, shadow tools that bypass IT, third-party applications with embedded features. The first step involves establishing an AI catalog that tracks all deployments in use.

This inventory should include:

- Technologies being used (both internal and third-party)

- Applications integrating new capabilities

- Data sources feeding these implementations

- Integration points where these connect to other services

Establish Clear Governance Policies

Effective trust risk and security management starts with defining what “responsible AI” means for your organization. This includes policies around:

- Who can deploy new implementations and under what conditions

- What data can be accessed

- How decisions should be explained and audited

- Requirements for testing before deployment

- Ongoing monitoring and evaluation procedures

These policies should align with your organization’s broader risk management approach and regulatory obligations. What’s appropriate for a financial services firm will differ from what makes sense for a software startup.

Build Security Into Development

Rather than treating protection as a separate concern, integrate it into existing development practices. This means:

- Running security tests as part of standard CI/CD pipelines

- Training developers on specific security risks

- Establishing secure coding guidelines for integrations

- Making security findings visible in the same tools developers use for other vulnerabilities

When trust risk and security management becomes part of the normal development flow, it’s more likely to happen consistently rather than being treated as a special case that slows things down.

Implement Runtime Monitoring

Static analysis and code review can’t catch everything. You need runtime testing that evaluates how implementations actually behave. This includes:

- Testing for prompt injection and other attacks

- Monitoring outputs for data leakage or inappropriate content

- Validating that rate limits and resource controls are working

- Checking that implementations respect access controls and data permissions

Runtime inspection should happen both during development (in pre-production environments) and continuously in production to catch issues as implementations evolve.

Focus on Continuous Improvement

AI TRiSM is an ongoing process. These technologies continue to evolve rapidly, new risks emerge, and regulatory requirements change. Organizations need mechanisms for:

- Regularly evaluating performance for degradation

- Updating security tests as new attack patterns emerge

- Reviewing and updating policies

- Learning from security incidents and near-misses

The goal is to create a learning organization that improves its risk management over time, not just checking compliance boxes.

Common Challenges in AI TRiSM Adoption

While the benefits of AI TRiSM are clear, organizations face real challenges in implementation:

Complexity: Modern implementations involve multiple components, complex data pipelines, and integration across numerous services. This complexity makes governance challenging. Organizations need tools that can handle this without requiring deep expertise from every team member.

Speed vs. Safety Trade-offs: Development teams are under pressure to ship features fast. They may see big frameworks as slowing them down. The solution is making security and governance so seamless that they don’t create friction in development workflows.

Skills Gaps: Many organizations lack deep expertise in both development and protection. They need approaches that help teams learn best practices while building, not requiring everyone to become experts first.

Tool Sprawl: As the market expands, there’s a risk of accumulating too many specialized tools that don’t integrate well. Organizations benefit from finding solutions that address multiple aspects within their existing stack.

Keeping Pace with AI Evolution: Capabilities and attack vectors evolve rapidly. What works for securing current implementations might not address next year’s capabilities. Organizations need approaches that can adapt as technology changes.

The most successful implementations address these challenges by starting small, focusing on high-risk use cases first, and gradually expanding coverage as teams build expertise and confidence.

The Path Towards Building Trust in AI Systems

AI TRiSM represents a fundamental shift in how organizations approach deployment. Instead of moving fast and hoping for the best, leading organizations are building AI TRiSM frameworks that let them innovate confidently because they know that trust, risk, and security concerns are being managed.

The organizations seeing the most success with AI TRiSM share common characteristics:

- They treat protection as part of application security, not a separate domain

- They build AI TRiSM controls into development workflows from the start

- They focus on practical AI TRiSM implementation that developers can actually use

- They keep evaluating and improving their AI TRiSM practices as capabilities evolve

As AI models become more powerful and more embedded in business operations, getting security right matters more than ever. AI TRiSM provides the framework for managing those stakes and ensures that as organizations push the boundaries of what these AI models can do, they’re doing it responsibly.

For organizations already thinking about protecting AI implementations, the message is clear: the best time to implement AI TRiSM was when you started deploying AI models. The second-best time is now. Waiting for perfect clarity on regulations or for technology to stabilize means accumulating security debt that becomes harder to address over time.

Getting Started with AI TRiSM and StackHawk

If you’re ready to start implementing AI TRiSM in your organization, here’s a practical roadmap:

- Assess Your Current State: Inventory your implementations, evaluate existing policies, and identify gaps in coverage. Understanding where you are helps prioritize implementing AI TRiSM efforts.

- Define Your Framework: Establish clear policies for development, deployment, and monitoring that align with your organization’s risk tolerance and regulatory requirements. This foundational work supports all implementing AI TRiSM activities.

- Implement Runtime Testing: Start testing AI-powered applications for vulnerabilities in the OWASP LLM Top 10. Tools like StackHawk can help you find and fix issues before they reach production, making implementing AI TRiSM more practical.

- Build Continuous Monitoring: Establish processes for ongoing evaluation, including performance monitoring, security testing, and compliance verification. These AI TRiSM practices ensure long-term effectiveness.

- Expand and Iterate: Start with high-risk implementations and gradually expand coverage. Learn from each implementation to improve your AI TRiSM practices over time.

The journey to full AI TRiSM implementation takes time, but every step makes your implementations more trustworthy and your organization more confident in adoption.

Organizations successfully navigating the challenges are those that embrace frameworks like AI TRiSM while finding practical ways to implement them. By combining solid AI TRiSM principles with effective tools and continuous monitoring, they’re building implementations that deliver business value without compromising trust or safety.

Ready to test your AI-powered applications against the OWASP LLM Top 10? Learn more about StackHawk’s LLM security testing capabilities and how they support your AI TRiSM implementation. You can also explore StackHawk’s documentation on LLM security testing and sign up for a free StackHawk trial to get started.