Integrate StackHawk into your AWS Pipeline

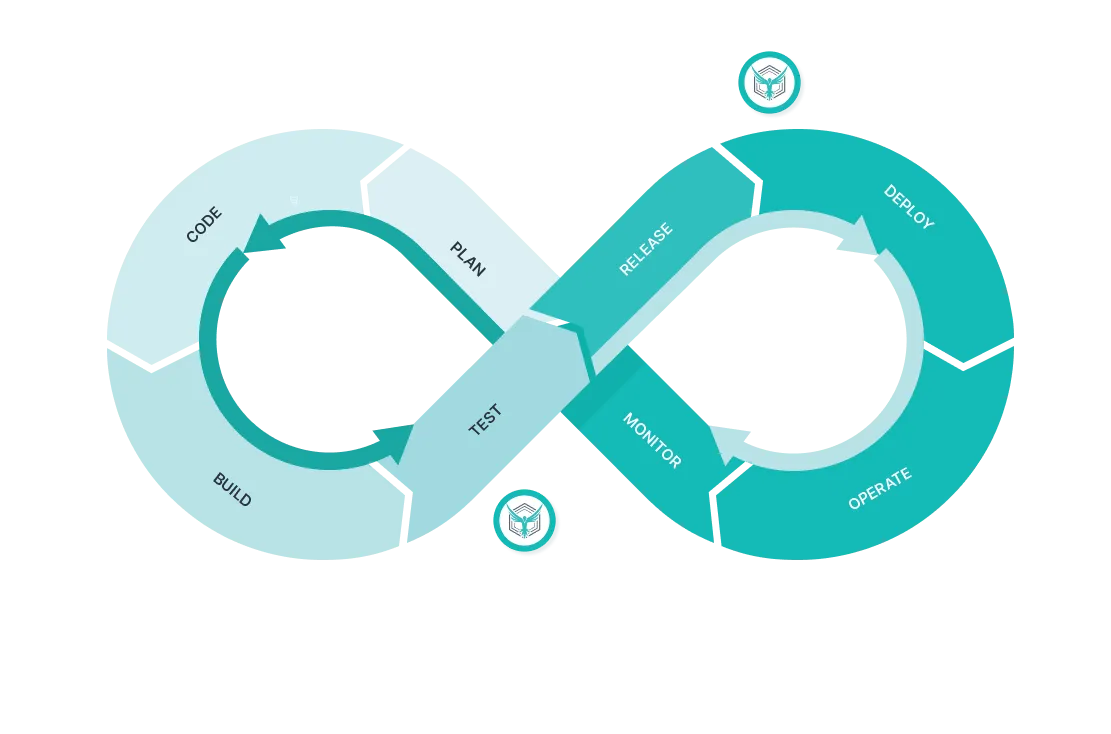

Application security has shifted left, and developers need a tool for reviewing and fixing security findings. StackHawk can run in your CI/CD pipeline with AWS CodeBuild and AWS CodePipeline, automating security testing as part of your software delivery.

Available on the AWS Marketplace

StackHawk is available on the AWS Marketplace, making it even easier for development teams to purchase and deploy StackHawk through their existing AWS account.

Learn how StackHawk and Snyk work together to find and fix vulnerabilities in your AWS workloads.

Interested in seeing StackHawk at work?

Schedule time with our team for a live demo.